Win or Learn: Growth Engineering at Airtable

- June 22, 2022

A conversation with Wendy Lu

We’re honored that Airtable’s Head of Growth Engineering, Wendy Lu, agreed to be THE inaugural growth engineering expert participating in the series (nobody can take that away from you Wendy!). Wendy has amassed a wealth of knowledge and experience both as an early practitioner of growth engineering during Pinterest’s dramatic growth stages and as the first Head of Growth Engineering at rapidly growing B2B SaaS startup Airtable where she has been building the growth engineering team from scratch since 2020.

Here are our top takeaways from the conversation:

- Core product teams are focused on building new power into the product, while growth is focused on helping users find the most value from existing power. Proactive communication and structure can help both teams avoid conflicts and benefit greatly from each other’s expertise.

- There’s not a single archetype for a growth engineer; the work spans from the very business- and product-oriented to deeply technical systems and infrastructure work.

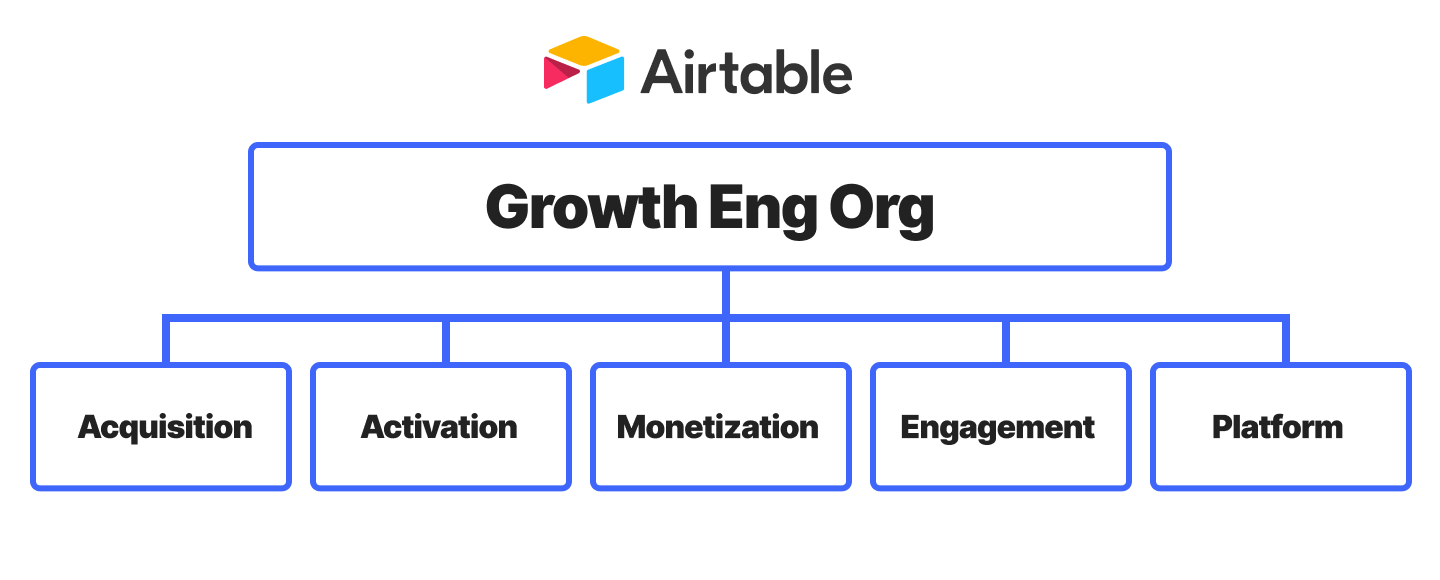

- Airtable organizes their growth teams by stages of the user funnel with a supporting team focused on growth platform. Smart investments in growth platform can allow teams to have more shots on goal and increase their rate of learning, making it easier to hit the business’ goals.

- Experimenting in B2B SaaS (especially down funnel) can take weeks or months to complete. Batching together changes or leveraging user research in lieu of an experiment can help with small changes unlikely to reach the minimum detectable effect.

- No project is a failure as long as you’re learning from it and using it to move forward. Leaders need to proactively establish this culture by recognizing the team for learning that occurs when a bet doesn’t pan out.

Meet Wendy

Alon:

Hey Wendy, thanks for joining us! Wendy is the head of growth engineering at Airtable, and she’s joining Switchboard today to chat about her experience as a growth engineering leader. How are you doing today?

Wendy:

Hey Alon, I’m great. Thanks for having me!

Alon:

To start out, I would be really curious to hear how you found your way into growth engineering. What initially drew you into it?

Wendy:

I started with growth engineering more on the consumer side.

Before my current role, I was at Pinterest for around eight years. For the first four years, I was an engineer. I worked across all aspects: mobile, backend, growth, ads, API, data, pretty much the whole stack. I worked on growth in my second and third year at the company.

What drew me toward it…I don’t think it was so much a draw versus a company need at that time where we had built out such a great product and a very sticky product, and we really needed to get more users registered and more users active on the product. So that was kind of the draw for me.

Personally, I’ve done a lot of different types of engineering in the past, I think sometimes people think growth is this amorphous thing that only certain types of engineers can do and there’s only so much you can do within growth—I actually haven’t found that to be the case.

I think growth engineering is engineering, so to speak. Obviously there’s special domain expertise that’s very useful, but I do think that in terms of the actual “engineering” piece, there are still a lot of the same challenges that we look at in other engineering teams.

What is growth engineering?

Alon:

What is growth engineering to you? When you think about your purview and what you are responsible for at the company, what fits into that?

Wendy:

I see growth engineering as responsible for a company’s business growth goals, whether that’s user growth, whether it’s revenue, or something else. Growth should be the team working toward that as their north star. It’s heavily metrics focused and the Growth team generally uses metrics to prioritize the most important aspects to work on.

Whereas a core product team might have an idea for a feature, they build it, and never really measure the results, Growth uses metrics at all stages. We use metrics to prioritize what we build, we use metrics to say “what do we build first?”, and then we use metrics to measure what we build after we launch it and determine whether something should launch or not.

So that’s one way to think about it. I think another way to think about it is that core product teams are building new power into the product, essentially raising the ceiling of what you can do with the product, and growth teams, their job is focused on helping users find the most value from existing power.

Growth engineering org structure

Alon:

I love that definition! We’ve talked to over 250 folks in growth, both engineering and product roles since we started Switchboard and have seen a ton of variability and consternation about where growth fits into the org—who owns the website? Business platform? Do core product teams own adoption or activation for their products if it’s a multi-product company? I’m curious, coming from Pinterest and now being at Airtable, how do you think about the scope of growth?

Wendy:

I think first of all, a lot of what you’re seeing is probably just the result of B2B growth being a newer art. On the consumer side, we have a lot of really large, really successful companies to model off of, Netflix, Facebook, Pinterest, et cetera…

In B2B product-led growth there have only been so many companies that have really had Growth teams, and if you look at companies over a $10 billion market cap, there are only really a handful.

So we’re still in early days. I would say all B2B companies with a growth team are probably trying to figure that out together—which is why there are not a lot of set models for how to organize a growth team and I think that’s actually really, really special and really, really fun. Because it’s something we’re all working through together. I often chat with Growth leaders at other B2B companies and we share a lot of the same challenges—it’s interesting to hear different viewpoints and perspectives.

In terms of how our teams are organized at Airtable. Currently we have four teams focused on areas of our user funnel and then we have one team that’s more of a growth platform team.

So our four teams are:

- User acquisition, which owns new user sign-ups. “How do we get more registered users on the platform?” Driving things like traffic, driving signup, also driving a lot of virality. They actually work very deeply in the product itself. Sometimes acquisition teams work primarily at the top of the funnel or on the un-authed surfaces, but our acquisition team works very deeply in the product to build viral features and viral loops.

- The second team is user activation. This team is focused on onboarding: “how do we teach people how to use the product? How do we make them really successful, essentially, in their first 30 days or so on Airtable?” Airtable is a very, very complex product. When I worked at Pinterest on growth, it was very much like, “Hey, we just got to get people to pin three things and then we’re good” and just reduce friction to doing that. For B2B products often you’re activating people on entire workflows. They really have to internalize what they’re doing and often it takes more than just a single session to activate them. We could be teaching users over the course of months how to use Airtable and gradually exposing them to more and more advanced feature sets.

- The third is our monetization team. This is our team essentially responsible for all of revenue, things like pricing and packaging, trial, conversion, and upgrades. How do we get more free users into paid, and then how do we retain those paid users, preventing churn on our paid plans over time?

- Then we have this team that you can think of as an engagement team. Many of our enterprise users start as self-serve and then start paying us and then eventually some percentage will graduate to enterprise. In order to make that graduation happen, we found that we need really engaged teams that are active in coming back to the platform. So this last team’s responsibility is how do we create those really, really engaged teams that become good leads for our sales teams to then convert to enterprise.

So those are the four funnel teams and then we have again one team focused on our platform layer. This team right now is very focused on our notifications platform—creating a platform to orchestrate and manage notifications that we send across email, in-app notifications, mobile push, et cetera, and really creating a powerful technical platform that other feature teams can use.

Alon:

How did that evolve over time? How has the shape of the teams or the way they’re structured evolved since you’ve been at Airtable?

Wendy:

When I joined Airtable, we were only three engineers on growth. At the end of the year, I think we’ll be, I won’t say how many, but many, many multiples of that.

So naturally we’ve had to evolve a lot. This is the structure that we’ve built over the past two years as the team has grown. Something interesting is that in the early days of Airtable growth, we were under more of a GM model. We all reported into a head of growth; on engineering, product, design, as well as email marketing.

Over time we’ve found that it’s helpful to have the different functions co-located. So engineering is reporting into engineering, product into product, et cetera. I think the GM model works really well when you’re starting a growth team because the team can move fast in a very aligned way.

But then, at some point, there is a benefit to splitting the teams out and using the systems that have been developed for the respective functions.

The need for a growth platform

Alon:

One of the topics I want to double click on is growth platform. I think a lot of people don’t realize that at scale, a lot of growth engineering is focused on building systems and platforms that are necessary to drive growth across the entire organization and to equip other teams to be able to contribute. Phil, one of the co-founders at Switchboard was leading growth design at Dropbox, and their growth platform had tens of engineers working on it.

As you’ve grown you’ve broken the teams out by stages of funnel and you’re starting to invest in growth platform right now. How have you thought about platform versus the funnel teams and the balance of time and energy that you’re putting towards one versus the other?

Wendy:

I would say on the growth platform side, we are still very early in building up a team around this. In terms of your question on balance, I think there’s no real magic ratio. I would say I’ve found there’s always a question of “how much time do we spend on new feature development versus foundational work?”

More and more in engineering, I found that there’s no “right” answer to that. You really, really have to listen to the teams, look at how long they’re spending on building things, talk to the team about tech debt and how they can move faster, and then make the call on which way you swing that pendulum as an engineering leader.

I would also say the quicker a growth team can iterate and learn the more successful they’re going to be, just because they’re going to have more shots on goal and they’re going to increase their rate of learning. So if you can identify those few things that really allow your growth team to exponentially speed up how quickly they’re getting features out the door then that’s always going to be better for the team to reach their business goals over time.

For example, at Pinterest we had a platform that allowed you to run copy experiments, very simple things. Usually, you have maybe two or three variants, you look at the results after a week, and then you make a call. But Pinterest actually said “hey, why do we even have to involve humans in this process? Why can’t the tool automatically find the best copy and then ship it to users?” We built a system where you could put in as many variants as you wanted and it would run a multi-armed bandit approach and eventually converge on the best variant. We didn’t even have to have anyone involved in shipping. So that was a big win for the team: how do you focus engineers on the most important things versus all of the manual things that you could abstract away?
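To make the multi-armed bandit idea concrete, here is a minimal Python sketch. It is not Pinterest’s system, whose details the interview does not describe. It assumes Thompson sampling with a Beta prior as the bandit strategy, and the names `CopyBandit` and `simulate`, the variant labels, and the conversion rates are all hypothetical.

```python
import random


class CopyBandit:
    """Thompson-sampling bandit over copy variants (illustrative sketch only)."""

    def __init__(self, variants, seed=None):
        self.rng = random.Random(seed)
        # Beta(1, 1) prior: one pseudo-success and one pseudo-failure per variant.
        self.successes = {v: 1 for v in variants}
        self.failures = {v: 1 for v in variants}

    def choose(self):
        # Draw a plausible conversion rate for each variant from its posterior,
        # then show the variant with the highest draw.
        draws = {
            v: self.rng.betavariate(self.successes[v], self.failures[v])
            for v in self.successes
        }
        return max(draws, key=draws.get)

    def record(self, variant, converted):
        # Update the posterior with the observed outcome.
        if converted:
            self.successes[variant] += 1
        else:
            self.failures[variant] += 1

    def impressions(self, variant):
        # Subtract the two pseudo-observations contributed by the prior.
        return self.successes[variant] + self.failures[variant] - 2


def simulate(true_rates, rounds=5000, seed=0):
    """Simulate users with made-up true conversion rates per variant."""
    bandit = CopyBandit(list(true_rates), seed=seed)
    user_rng = random.Random(seed + 1)
    for _ in range(rounds):
        variant = bandit.choose()
        converted = user_rng.random() < true_rates[variant]
        bandit.record(variant, converted)
    return bandit


if __name__ == "__main__":
    result = simulate({"A": 0.05, "B": 0.10, "C": 0.30})
    for name in ("A", "B", "C"):
        print(name, result.impressions(name))
```

In a simulation like this, traffic drifts toward the variant with the highest true rate as evidence accumulates, so no one has to read a dashboard and pick a winner by hand. New variants can be added at any time with the same prior.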

Growth at B2B SaaS vs consumer companies

Alon:

Pinterest is kind of the Goliath in the growth engineering community. At the scale that you all were at and the sophistication of the growth platform that you were just talking about it’s really at the far end of the spectrum.

I’m curious in comparison to Airtable, being a B2B SaaS company with many users itself but fundamentally a different kind of product, what learnings have you been able to carry over from your time at Pinterest? What’s the same about both experiences and what’s different?

Wendy:

I think mapping out the funnel is very similar. It’s still user acquisition, onboarding, though monetization is when it starts to diverge quite a bit. For consumer companies, monetization tends to be in a totally different org versus B2B companies.

Other things that are similar: our experimentation tactics, how you run experiments, the types of platforms you need.

In terms of differences: I think the collaboration with sales and marketing is definitely something that is very, very important in a B2B business. Oftentimes in B2B, marketing has very real needs on the website or on marketing emails. All of that needs to be a very tight collaboration between the product team and the marketing team. So that’s something I found that was very, very different.

Similarly with sales, I told you that one of my team’s explicit jobs is to drive leads that our enterprise team can then go and talk to right away and have that initial conversation with. That’s a very different motion than anything that would happen in consumer.

Another difference I would say is just the data available. B2B companies tend to be smaller scale. The total addressable market is often smaller for any B2B SaaS tool versus a consumer tool, just by nature of the product. But the intent is higher: when someone’s signing up for a SaaS product, they have probably put some thought into it and are not just looking for entertainment, like someone bored downloading a new consumer app. So I think that the type of user psychology tends to be pretty different.

I think that there are a few things that really surprised me when going to the B2B world. At Pinterest, there would be times where we would say, “Hey, we have this idea, let’s just go test it.” We could do some analysis to validate it, but let’s just test it and wait a week and look at the results. That was pretty easy. At a company like Airtable, or any B2B company, if you’re optimizing for a metric that is very down funnel it might take you weeks or even months to get enough data to validate your hypothesis.

We’ve tried to get around that in different ways. The first is just taking bigger swings. Making sure that whatever we build, we feel like it’s the best thing that can ultimately move the metric. And the second is doing more upfront work. We do a lot of upfront data validation, user research, et cetera, so that when we build and run something we can be more and more confident that this is the end variant that we want to launch.

Experimentation at B2B SaaS

Alon:

That last point about experimentation really resonates with my own experiences. At Productboard, and from talking to other folks at B2B SaaS companies, I’ve found that the volumes of users down funnel are just much, much smaller. A lot that’s been written about how teams should think about experimentation grew up in consumer-land where you could experiment almost at any place in the product because you had the user volumes to support it.

How has Airtable’s approach to experimentation changed over time? I know that Airtable now actually does have significant user volumes, but it’s still a B2B SaaS product and kind of constrained by some of those same issues that I’ve seen elsewhere.

Wendy:

Yeah. So I think first of all, we’re lucky because I think we have some of probably the largest volumes in B2B SaaS. So we are still able to, in some parts of our funnel, run experiments fairly quickly. I would say how it’s changed is that we don’t need to run an experiment for every single thing.

I think we do heavily use A/B experimentation, but we’ve also found that when there’s a small change that is unlikely to reach the minimum detectable effect, so we’re unlikely to get a statistically significant signal on it, we just ship it. And then, obviously if we’re confident that this is a positive for the user experience, we should be comfortable shipping even without that validation of results. As long as we know we’re not hurting anything.

So our bet is that if we ship 10 of these little things over time, we should see that metric move. Even if we can’t detect it for every little thing. The other approach is to bundle these little changes together and run one big experiment and hope that you can detect that effect. However, that does come at a cost: you are kind of holding back features until you have that big bundle of things to watch. So there are definitely, definitely trade-offs.
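The trade-off around minimum detectable effect can be made concrete with a standard sample-size calculation. The sketch below uses the usual normal-approximation formula for comparing two conversion rates; it is not Airtable’s tooling, and the function names, baseline rate, lift, and traffic figures are hypothetical.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(baseline, relative_lift, alpha=0.05, power=0.8):
    """Users needed per arm to detect a relative lift in a conversion rate.

    Two-sided test on two proportions, normal approximation.
    """
    p1 = baseline
    p2 = baseline * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return math.ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


def weeks_to_run(n_per_arm, weekly_users, arms=2):
    """Weeks of traffic needed if `weekly_users` enter the experiment each week."""
    return math.ceil(n_per_arm * arms / weekly_users)


if __name__ == "__main__":
    # Hypothetical down-funnel metric: 5% baseline, hoping for a 10% relative lift.
    n = sample_size_per_arm(baseline=0.05, relative_lift=0.10)
    print(n, weeks_to_run(n, weekly_users=5000))
```

With these made-up inputs the formula asks for roughly 31,000 users per arm, which at a few thousand eligible users a week stretches into months. Halving the expected lift roughly quadruples the requirement, which is why tiny changes are often shipped directly or bundled into one larger test.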

Alon:

And on platform side, or at least in support of the experimentation work, any investments that you’ve made as experimentation has scaled up at Airtable that have paid off well? Or any other advice you’d have for other managers or leaders that are maybe earlier in the process and are starting to think about experimentation in a B2B SaaS company?

Wendy:

Definitely. When we grew from three to thirty engineers, one of the things we found was that you can’t have a data analyst analyzing every single experiment. That’s not going to scale. We’re still working on this effort, but our goal is to enable folks within the organization to be fluent with data to the point where our data analysts are not spending the majority of their time on experiment analysis. They’re actually looking a quarter ahead and doing more strategic work to tee up our themes for the next quarter instead of just being reactive to experiments every week. So that is a consistent thing we have to keep top of mind, and we’ve done that by having the data analysts give trainings to their teams on how to use their data sources and how to use the tables, so that people are fluent and can do the majority of analyses themselves. We’re also building out templates so that engineers can just plug in the name of an experiment and get at least our baseline metrics, the ones we always care about.

Sometimes for a deeper analysis we might need to pull in the data team, but at least there’s that baseline of “hey, let’s get the best-effort template out there so that teams can be self-sufficient.” Those are definitely some things that have worked well as we’ve grown.
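A self-serve template of this kind might look something like the following Python sketch. The interview does not describe Airtable’s actual templates or data model, so the `baseline_report` function, the row format, and the single conversion metric are all assumptions made for illustration.

```python
from collections import defaultdict


def baseline_report(experiment_name, exposures):
    """Per-variant baseline metrics for one experiment.

    `exposures` is an iterable of dicts with the (hypothetical) keys
    "experiment", "variant", and "converted".
    """
    totals = defaultdict(lambda: {"users": 0, "conversions": 0})
    for row in exposures:
        if row["experiment"] != experiment_name:
            continue  # ignore rows that belong to other experiments
        stats = totals[row["variant"]]
        stats["users"] += 1
        stats["conversions"] += int(row["converted"])
    return {
        variant: {**stats, "conversion_rate": stats["conversions"] / stats["users"]}
        for variant, stats in totals.items()
    }


if __name__ == "__main__":
    rows = [
        {"experiment": "onboarding_v2", "variant": "control", "converted": False},
        {"experiment": "onboarding_v2", "variant": "control", "converted": True},
        {"experiment": "onboarding_v2", "variant": "treatment", "converted": True},
        {"experiment": "pricing_page", "variant": "control", "converted": True},
    ]
    print(baseline_report("onboarding_v2", rows))
```

The point of the design is that the only input an engineer supplies is the experiment name; the metric definitions live in the template, so every team reads the same baseline numbers.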

What makes a successful growth engineer?

Alon:

Any advice you have for folks that are getting started with growth and thinking about how to build their growth engineering orgs?

Wendy:

Yeah, of course. First of all, it is a collaboration. A lot of growth is how you understand the business and how you understand the user.

As I mentioned, I think there’s not really one profile of growth engineer. A lot of times people think it’s like “hey, people who want to update the color of a button” or do those types of minor UX optimizations. But I found that, honestly, as growth teams scale there are a ton of challenges both on the product side and the technical side. How do you teach people how to use a complex product on the order of months?

That’s a very, very hard problem—to add and build out the frameworks and the user interfaces for that. And you also have systems to deliver millions of notifications a day. Or how do you recommend people the freshest content in fractions of a second. Those are all super deep engineering challenges and those are all things that growth teams probably will take on at some point.

I think there’s not really one profile of growth engineer and I think that what’s so great about growth is that anyone can be great at it. You can be a year or two out of college and as long as you’re awesome at finding opportunities and really user focused, you can find things that make a great impact. On the other hand, you also have super deep technical systems and challenges that are infrastructure level. So I think there’s not one archetype of a growth engineer. There are many, many different types of people and expertise needed to make a growth team work.

Alon:

That’s really interesting, I think definitely one of the things that we’ve found when talking to growth engineers is the product-mindedness that comes across.

When you think back to the earliest days of the team, when you all were just three engineers, what attributes would you be looking for? Did folks coming in need more generalist skill sets when the team was smaller? Does our experience with product-mindedness resonate with you? Are there other things that set people up for success?

Wendy:

Yeah, product-mindedness resonates with me a lot.

I think the speed of iteration in growth is just so quick that you can’t have a PM teeing up a product for a month and then an eng is set for three months. Often these cycles are weeks or days even. The more that you can have folks who are flexible and can find opportunities when needed, build when needed, fill in for PM when needed, I think the faster you’re going to be able to just execute and move with higher velocity.

I think it’s also really important from a management perspective, that managers encourage those types of frameworks within their team, because with such a high velocity and iteration speed it’s going to be very hard to plan out every single: “this person is working on this for this month, this person is working on this for this month”. You really need to enable the teams to be able to function independently and find those opportunities and move forward. So that is definitely something we’d look for.

Second is orientation towards an impact. I always say that a growth engineer should not be afraid to throw away code or a feature that doesn’t work. At the end of the day, they’re oriented toward the business impact and not married to the technology.

I think that’s an interesting thing, having that higher level of company mindset.

Building a growth culture

Alon:

Curious how you’ve coached folks on the team. I presume that there can sometimes be tension between things that people have built and a desire to see things through, and some of the necessities of the business. Has that come up? How have you talked to your team about that? How do you approach those situations?

Wendy:

I would say, first of all, the higher the hit rate we could have, the better. So in terms of coaching the team, I always encourage them to, especially in a B2B world where we don’t have the luxury of running experiments as quickly, do upfront validation to make sure that they’re working on the most important thing. For example, if you’re going to spend two months building out an engagement email, make sure that the reach of that engagement email is the widest it can be. You never want to do that and find that this actually only works for 3% of users. I definitely encourage folks to make sure that their investments are sound upfront and hopefully that can avoid a lot of the pain of throwing away something that you’ve worked really hard on.

Secondly, again, with the iterative approach—if you can do something quick to validate that this actually works and then do the majority of the hard technical work, that’s always better. If you can do a prototype and in one or two weeks launch it into the wild, get some early data, and then iterate I think that’s always going to be a positive. Obviously you can’t do it for every experiment, but when you can, definitely encourage folks to do so.

And then the third thing is just a cultural aspect. One of my favorite sayings is “Win or Learn”, which means that obviously, we play to win. We have ambitious goals as a company. But no project is a failure, as long as you’re learning from it and using that to move forward.

So really celebrating not only the metrics wins, but also things that drop metrics or things that didn’t go well but we learned a lot from and that we can use to inform what we build in the future. I think a big failure mode for growth teams is if people are only sharing out wins and not sharing out their learnings. You get into this cycle where those really, really important learnings are not really being distributed throughout the organization and that’s going to slow you down over time.

Alon:

I like that framing a lot. Are there tactics or things that you’ve seen work well to socialize those learnings or get people to engage with them? Or allow others outside of the growth teams to take something away that can impact their work?

Wendy:

That’s a great question. I think one thing that I found is very helpful is that it starts with the leaders of the team. If managers are doing this and recognizing folks for these learnings, even when the metrics are not a win, that’s always helpful. If the role models and the most senior engineers in the organization are also doing that, then everyone else will see that and know that, “hey, this is a great thing. It’s okay to do this”.

Cross-functional ownership and alignment

Alon:

Something you’ve brought up before, and something that we’ve heard given the nature of growth work (e.g. the speed of iteration and the business impact orientation) is that the style can be different and it can create overlaps with either core product teams or other functions like marketing with respect to ownership over certain areas or metrics and goals.

Something that I’ve run into in my own career is that when there are misalignments across different functions, growth often feels the burden first and most directly. I’m curious how you’ve navigated that, or what you’ve found to be the most effective way of navigating that collaboration between the growth org and other groups in the company?

Wendy:

I think I’ve been lucky to be at companies with a really strong emphasis on company orientation. I think that the ownership piece actually doesn’t come up as much as I would expect given that, similar to a lot of companies at early stage or mid stage, there’s just so much to do.

There’s no time to think about ownership. It’s like “hey, there’s so much to be fixed so let’s have teams do what they think is the most important and then only when there are actual overlaps, we can go and resolve those.” But a lot of times, I want to avoid over optimizing for ownership before it actually becomes a problem. A lot of times teams are learning, they’re going to find new opportunities, and you want them to feel supported in pursuing them. Even if they might fall a little bit outside of what they’ve traditionally worked on.

You need to set that culture of really encouraging that ambition, encouraging people to not only stay in one or two surfaces but to find opportunities throughout the product. However, also setting up the right systems and framework so that you have those checks and balances when you need them. I think it’s actually great if a growth team proposes something that is very, very ambitious and then gets pushback from another team. That means that we’re actually stretching ourselves and we then get together and discuss the best path forward. We’re proposing ambitious ideas and these are really things that could make a step function improvement in the company’s growth. That’s a great thing!

What’s not great is if the other team feels like “hey, we didn’t even know this feature was going out and we’re surprised by it and we feel like we didn’t have a chance to weigh in on it.” I think it comes down to creating those checks and balances at a process level so that people can be informed, without discouraging ambitious ideas.

Alon:

When conflicts arise, are there tactics or things that you’ve found to be particularly effective at having those hard conversations with other folks, getting them to understand why this is something that the growth team cares about and why it benefits them?

Wendy:

Yeah—I think putting things behind feature flags, putting things behind experiments, tends to quell a lot of these concerns. We’re going to test it. If we see that this drops one of the metrics that the core team cares about or drops overall engagement, we can always roll back. That usually helps people feel more comfortable. I think another thing is just doing user research. Oftentimes, we might think something is totally off from user expectations, but when users see it they’re actually fine with it or they don’t notice it.
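The reassurance that “we can always roll back” rests on a simple mechanism. The Python sketch below shows one common way to build a percentage-based feature flag with deterministic bucketing; it is an assumption for illustration, not a description of Airtable’s flagging system, and the `FeatureFlag` class and flag name are hypothetical.

```python
import hashlib


class FeatureFlag:
    """Percentage rollout flag with stable per-user bucketing (illustrative)."""

    def __init__(self, name, rollout_percent):
        self.name = name
        self.rollout_percent = rollout_percent

    def _bucket(self, user_id):
        # Hash the flag name with the user id so each user lands in a stable
        # bucket from 0 to 99, independent of their bucket for other flags.
        digest = hashlib.sha256(f"{self.name}:{user_id}".encode()).hexdigest()
        return int(digest, 16) % 100

    def is_enabled(self, user_id):
        return self._bucket(user_id) < self.rollout_percent

    def roll_back(self):
        # Turning the feature off is a configuration change, not a deploy.
        self.rollout_percent = 0


if __name__ == "__main__":
    flag = FeatureFlag("new_share_dialog", rollout_percent=50)
    print(flag.is_enabled("user-123"))
    flag.roll_back()
    print(flag.is_enabled("user-123"))
```

Because a user’s bucket never changes, the same people stay in the treatment group for the life of the experiment, and setting the rollout to zero removes the feature for everyone at once if a guardrail metric drops.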

Alon:

Have you found that core product teams have taken any learnings from the situations where growth has stepped in to try to run an experiment or try something out? Any growth practices that you’ve seen socialized in the organization? How do you think about championing growth approaches outside of the core growth org?

Wendy:

I think there’s definitely a lot of room for that collaboration. Core product teams are often launching net new power, net new features into the product and the idea of “how do we disclose this new feature and onboard users into how to use it?” is something that a growth team can definitely collaborate with and help to integrate the feature into the overall onboarding holistic journey. So that’s definitely an area of collaboration.

Pricing and packaging is another area that tends to be very, very collaborative. What features are we putting in our plans? How do we determine what’s in our free tier versus our self-serve tiers versus our enterprise? And really working with the core product team to understand “what type of persona is this feature built for? What are the upsell opportunities that we could build around this feature?” Maybe they have a set number in the free tier and then using that to upsell them all the way up to enterprise.

Definitely easier said than done. I think it’s often difficult to keep track of everything that’s going on within a product development organization.

Really socializing these frameworks so that the collaboration is coming both ways, it’s not just growth going to the core product and being like “hey, we saw that you were launching this,” but the core product team is actually wanting to consult with growth when they launch a new feature because they know it’s going to increase adoption for them, and also it’s going to help the company hit its business goals.

Thank you Wendy Lu!

Alon:

Well, I think we’re getting close to time, so thank you for the time and for chatting with us today! In parting, what’s one surprising thing about you that most people don’t know? It doesn’t need to be growth related!

Wendy:

I’ve recently been playing a lot of pickleball. I moved up to Marin at the beginning of the pandemic and I have a pickleball court around a mile from my house. I’m getting better and better at that as I play weekly. So yeah, if anyone is a pickleball fan, definitely hit me up!

Alon:

Awesome, well thanks again for the time, Wendy! Hope you have a great rest of the day!

Wendy:

Yeah, thanks so much Alon!

You can connect with Wendy on Twitter and LinkedIn.

Try Switchboard

We started Switchboard to make it easier to build fully integrated experiences that drive activation and engagement (like tailored onboarding) into your product. We provide powerful building blocks to define targeting and journey logic. Our APIs and SDKs give you full control over the UX and fit into your product development workflow, saving valuable dev time.

We’re working with a small group of design partners as we build Switchboard. If you’re creating tailored onboarding and our approach is compelling, get started and sign up for a free account.